When I first explored Liav Gutstein’s Sphere Theory, I realised that it represents a shift in perspective on AI. Instead of asking how machines can become smarter, Gutstein asks a far more provocative question:

What would it take for a system to develop a sense of self?

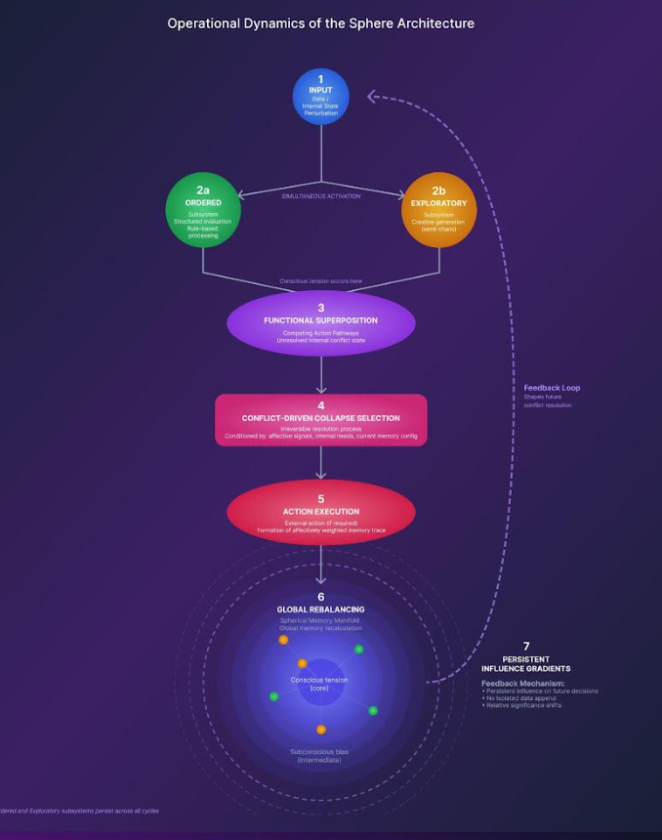

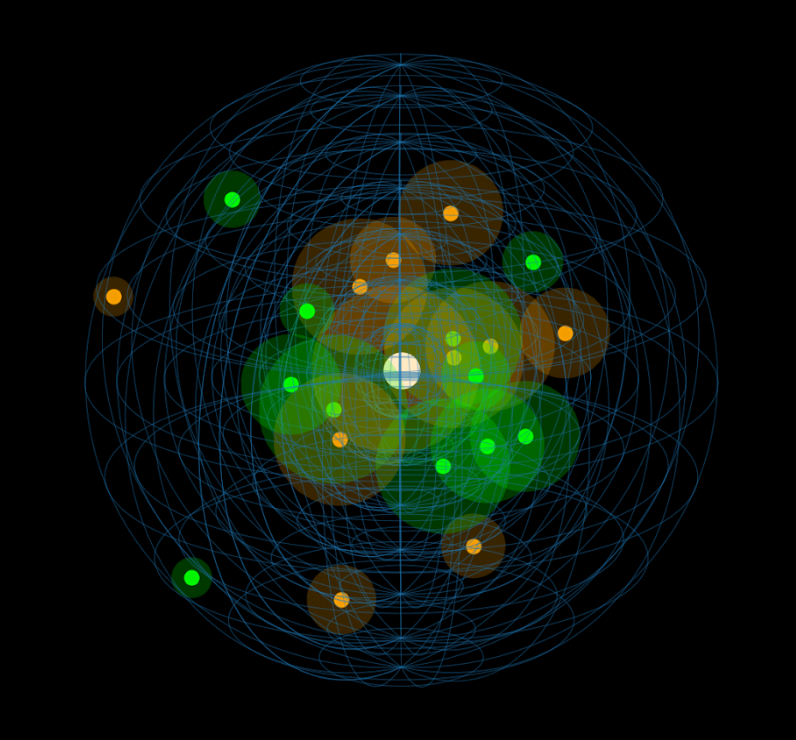

His Sphere framework challenges conventional AI thinking by introducing the idea that consciousness may not emerge from optimization or efficiency, but from persistent internal tension, a dynamic interplay between competing forces that resists easy resolution. It’s a concept that feels less like traditional computer science and more like a bridge between cognition, philosophy, and system design.

What makes Gutstein’s work particularly compelling is his unconventional path. With over two decades in product design and human-centered UX, he approaches artificial consciousness not as an abstract academic problem, but as a lived, experiential system, one shaped by interaction and identity formation.

In this conversation, I go deeper into the origins of Sphere Theory, its potential real-world implications, and the unsettling questions it raises about autonomy. From the role of structured conflict in conscious systems to the possibility of blockchain as a foundation for AI identity, Gutstein offers a perspective that is as thought-provoking as it is cautionary.

If the future of AI is not just intelligent, but self-aware, then this may be one of the most important conversations we need to have right now.

For readers of TechieTonics who are encountering your work for the first time, what inspired you to pursue the idea of artificial consciousness, and how did that journey eventually lead to the development of Sphere Theory?

I’ve always been drawn to innovation and emerging technologies, from blockchain and crypto to AI and quantum theory. What excites me most is exploring new domains in depth and understanding how I can contribute my perspective and value to them.

Over time, this curiosity naturally led me to one of the most fundamental questions in AI: not just how systems think, but what it would mean for them to be aware of their own thinking.

That question became the starting point for developing the Sphere Theory.

With over 20 years of experience in product design and UX, how has your work in human-centered design shaped the way you think about building conscious or identity-forming systems?

It has influenced me profoundly. Working in human-centered design sharpened my understanding of the boundaries between human cognition and its translation into artificial systems.

It’s not accidental that the Sphere Theory draws strong parallels to processes observed in humans. My background gave me a unique lens, especially because I approach this not as an academic researcher, but as a practitioner focused on systems, behavior, and experience.

Your framework is conceptually compelling, but what would a practical implementation of Sphere Theory look like in software or system architecture? Are there specific computational models that could support it?

The Sphere is primarily a conceptual framework.

I’m not a mathematician or a formal scientist, so I don’t claim to define its exact implementation. However, I strongly believe that the closer we get to modeling and mapping human cognition, the closer we get to higher forms of artificial consciousness.

That said, parts of the theory are already testable today. I see this as an open invitation for researchers, mathematicians, and engineers to take these ideas further and translate them into concrete architectures.

If researchers attempted to build a prototype based on Sphere Theory, what would be the first experimental milestone that could demonstrate progress toward artificial consciousness?

A meaningful milestone would be the ability to sustain tension between two competing subsystems over time, long enough for that tension to actively influence both current and future decisions.

This persistence of unresolved conflict, rather than immediate optimization, is where the foundations of consciousness begin to emerge in the Sphere framework.
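As an editorial aside, the milestone described above can be made a little more concrete with a toy sketch. This is not Gutstein's implementation, and Sphere Theory does not prescribe one. The class name `TensionAgent`, the `decay` parameter, and the blending rule are all assumptions chosen purely for illustration. The only idea taken from the answer is that disagreement between two subsystems is carried forward rather than resolved immediately, so that it shapes later decisions.

```python
from dataclasses import dataclass, field


@dataclass
class TensionAgent:
    """Toy agent that carries unresolved conflict between two hypothetical subsystems."""

    decay: float = 0.9   # assumption: share of old tension kept at each step
    tension: float = 0.0  # accumulated, unresolved disagreement
    history: list = field(default_factory=list)

    def step(self, ordered_pref: float, exploratory_pref: float) -> str:
        # Disagreement on this step (0.0 means the subsystems agree).
        disagreement = abs(ordered_pref - exploratory_pref)
        # Tension persists: it decays slowly instead of being reset to zero.
        self.tension = self.decay * self.tension + disagreement
        # Illustrative rule: more carried tension gives the exploratory
        # subsystem more weight in the blended preference.
        weight = self.tension / (1.0 + self.tension)
        blended = (1.0 - weight) * ordered_pref + weight * exploratory_pref
        choice = "exploratory" if blended > 0.5 else "ordered"
        self.history.append((self.tension, choice))
        return choice


agent = TensionAgent()
first = agent.step(0.2, 0.9)   # same inputs on both steps...
second = agent.step(0.2, 0.9)  # ...but the carried tension changes the outcome
print(first, second)
```

With these particular numbers the first call follows the ordered subsystem and the second follows the exploratory one, even though the inputs are identical. The difference comes entirely from tension carried over from the earlier step, which is the property the milestone asks for. Whether anything like this relates to consciousness is, of course, exactly the open question.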

Could the Sphere framework be integrated with existing AI paradigms, such as reinforcement learning or neural networks, or would it require an entirely new architecture?

It can certainly integrate with existing paradigms.

Current models can naturally serve as the Ordered System, since they already excel at analytical, statistical, and computational processing. The challenge is not replacing these systems, but complementing them with an additional layer that introduces structured conflict, exploration, and irreversibility.

As AI systems become more autonomous, how might Sphere Theory influence future human–AI interaction, especially in systems that appear to have evolving identities?

I believe artificial consciousness will emerge through attempts to approximate human-like processes, which is where the Sphere framework operates.

However, before anything else, we must place strong emphasis on autonomy and its implications. If we expect systems to develop identity and consciousness, we are effectively enabling them to evolve beyond their initial state, potentially in unpredictable directions.

This is, in many ways, a Pandora’s box. Once opened, it cannot be closed. That’s why caution, safeguards, and responsibility are critical now, while we still have control over the process.

You’ve worked extensively in crypto, blockchain, and AI. Do you see any potential for decentralized technologies to play a role in future conscious or identity-based AI systems?

Absolutely.

These fields are evolving at an exponential pace. Recently, Nvidia’s CEO, Jensen Huang, suggested that AI systems will require a “system of record”, a reliable, immutable source of truth.

From my perspective, this is exactly where decentralized technologies come into play.

Blockchain can provide the foundation for persistent, tamper-proof memory and identity layers, which are essential components in any system aspiring toward continuity, accountability, and trust.

It could also play a key role in verifying AI-generated information and combating issues like fake news and synthetic identities.
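As an editorial aside, the core mechanism behind the "tamper-proof memory" idea can be sketched in a few lines. The example below is a plain hash chain, not a blockchain: there is no network, consensus, or signing, and it only makes edits detectable rather than impossible. The function names `append_record` and `verify`, the `GENESIS` constant, and the record layout are assumptions made for illustration and are not part of Sphere Theory or any particular platform.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumption: placeholder hash that precedes the first record


def _digest(prev_hash: str, payload: dict) -> str:
    # Hash the previous link together with the payload, using a stable key order.
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_record(chain: list, payload: dict) -> None:
    """Append a memory record that commits to everything stored before it."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append(
        {"prev": prev_hash, "payload": payload, "hash": _digest(prev_hash, payload)}
    )


def verify(chain: list) -> bool:
    """Return True only if no record has been altered, removed, or reordered."""
    prev_hash = GENESIS
    for record in chain:
        if record["prev"] != prev_hash:
            return False
        if record["hash"] != _digest(prev_hash, record["payload"]):
            return False
        prev_hash = record["hash"]
    return True


memory = []
append_record(memory, {"event": "first decision"})
append_record(memory, {"event": "second decision"})
print(verify(memory))                           # chain is intact
memory[0]["payload"]["event"] = "rewritten"     # tamper with an old memory
print(verify(memory))                           # tampering is now detectable
```

Because each record's hash covers the previous record's hash, changing any earlier entry invalidates the check from that entry onward. A decentralized ledger adds replication and agreement among many parties on top of this basic structure.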

Rapid Fire

What is your favorite movie quote?

“I’ll be back.” – Arnold Schwarzenegger, The Terminator.

To me, this is innovation 101: persistence. You hit a wall, you come back stronger.

One word you would use to describe the future of artificial consciousness?

Human-like.

A book or thinker that most influenced your ideas about consciousness?

Mark Solms.

If you could collaborate with any scientist or philosopher (past or present), who would it be?

Leonardo da Vinci.

What book(s) should I read in 2026?

The first book written by an artificial consciousness. That should be interesting.

Wow! Thank you, Liav, for such a deeply thought-provoking and inspiring conversation. Your perspective on artificial consciousness and the Sphere framework challenges us to think beyond intelligence and to consider what it truly means to be aware. I’m excited to continue following your journey as these ideas evolve and take shape. Until then, I wish you continued success as you push the boundaries of what’s possible in the future of AI.