When I first came across Therabot, Dartmouth’s AI-powered therapy chatbot, I was immediately intrigued. The idea that technology could play a meaningful role in mental health treatment fascinated me, so I reached out to Dr. Nicholas Jacobson, a leading expert in this space, for an interview. To my delight, he agreed.

Dr. Jacobson is an Associate Professor in Biomedical Data Science and Psychiatry at the Geisel School of Medicine at Dartmouth College. He leads the AI and Mental Health: Innovation in Technology Guided Healthcare (AIM HIGH) Laboratory and directs the Treatment Development and Evaluation Core within the Center for Technology and Behavioral Health (CTBH). His research focuses on leveraging technology (smartphones, wearable devices, and AI) to enhance both the assessment and treatment of anxiety and depression. His work has led to smartphone apps that have reached over 50,000 users in 100+ countries and an NIH-funded study on using deep learning to predict rapid shifts in depression symptoms.

Beyond academia, Dr. Jacobson is an outdoor enthusiast who enjoys hiking, biking, and skiing. And if you ever need a karaoke partner or a great vegan restaurant recommendation, he’s your guy!

With that, let’s dive into our conversation. Scroll down for the full interview.

What initially drew you to the intersection of AI and mental health? Was there a specific moment or experience that sparked your passion for this field?

As a clinical psychologist by training with experience in computational modeling and computer science, I’ve seen firsthand the immense limitations of our current mental healthcare system. The stark reality is that most people suffering from mental health conditions don’t receive any form of treatment. Consider the ratio: there are roughly 1,600 patients with anxiety or depression for every single mental health provider available each year. This massive gap highlighted the need for innovative solutions. AI offers the potential to provide personalized, evidence-based treatment at a scale that’s simply not possible with traditional methods alone.

What inspired you to develop Therabot, and how does it differ from existing mental health chatbots?

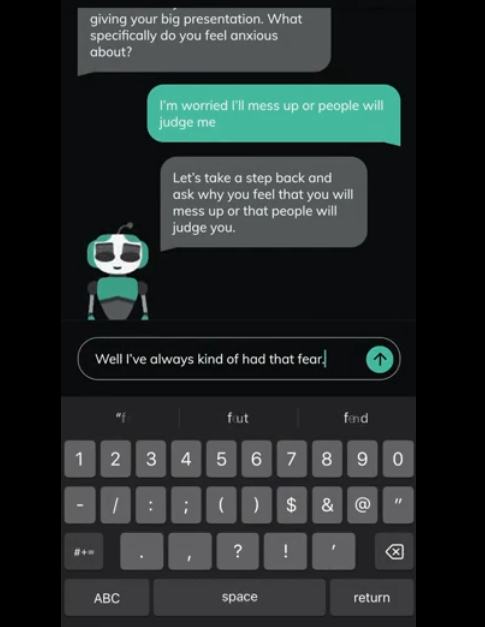

The inspiration came from recognizing the limitations of existing rule-based chatbots. While useful to a point, they struggle with the complexities of human mental health, particularly comorbidities and the deep need for personalization. We began developing Therabot in 2019 as an entirely generative system. Our goal was to provide evidence-based care that could adapt to individual needs and address multiple issues concurrently, much like a human therapist would personalize treatment. This generative approach allows for more natural, nuanced, and flexible conversations.

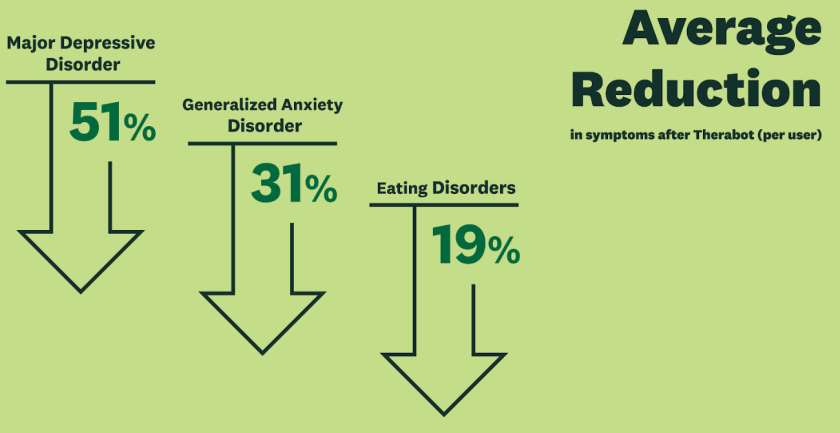

Can you share key takeaways from the clinical trial? Were there any unexpected findings?

The results were very strong. We observed large and clinically meaningful reductions in symptoms across all three groups studied. Specifically, compared to the waitlist control, Therabot users showed substantial improvements in depression, anxiety, and eating disorder symptoms. Another key finding was the level of therapeutic alliance users reported, which was similar to human therapist norms and perhaps more positive than initially anticipated, underscoring the potential for AI to foster connection.

With AI rapidly evolving, what do you see as the biggest challenges in integrating generative AI into healthcare?

The primary challenge lies in ensuring safety and efficacy through rigorous scientific validation. Building truly effective and safe generative AI systems for healthcare requires a level of scientific discipline and testing that many developers may not be undertaking. There’s a risk of products being released without sufficient evidence, potentially leading to harm or ineffective care. Proper development, testing like our RCT, and careful oversight are essential but demand significant resources and commitment.

There’s always concern about AI replacing human therapists. How do you view the balance between AI assistance and human intervention in mental health?

AI should never replace human therapists. The demand for mental health professionals vastly outstrips supply – clinical psychologists, for instance, have consistently maintained near-zero unemployment rates, even during recessions, precisely because the need is so great. Our aim with Therabot is to offer evidence-based support to the vast number of people who currently lack access to any care, not to supplant human providers.

Even if AI tools become highly successful, the fundamental need for human connection and expertise in therapy means I cannot foresee a future where they meaningfully impact the demand for human therapists. It’s about expanding access, not replacement.

What ethical considerations were taken into account while designing Therabot? How do you ensure patient trust and data privacy?

Data privacy was paramount; all data are maintained on HIPAA-compliant servers. Informed consent was crucial – we ensured participants clearly understood they were interacting with an AI chatbot, not a human. Beyond that, many concerns often framed as purely “ethical” issues are, in my view, fundamentally empirical questions about benefits versus risks. What works? What are the potential harms? Answering these requires rigorous testing. That’s why we conducted the trial, building on years of design and testing involving over 100 people and accumulating over 100,000 human hours of development and refinement to maximize benefits and minimize risks.

What advancements in AI and mental health do you foresee in the next five years?

Enhanced context awareness is likely the next major frontier. This involves the AI’s ability to integrate information beyond the immediate text conversation – perhaps data from wearables, patient history, or environmental sensors – to provide even more personalized and timely support.

Do you think AI-powered therapy can bridge the mental health accessibility gap in developing countries?

We certainly hope so, and that’s a major motivation. The potential is there to offer scalable, low-cost interventions where resources are scarce. However, a lot more work is needed to understand how well these tools translate across cultures, languages, and varying levels of technological infrastructure and literacy. It’s promising, but requires careful adaptation and validation in those specific contexts.

Quick bits:

• If Therabot had a personality, how would you describe it in three words?

Empathetic, evidence-based, responsive.

• What’s one misconception people have about AI therapy?

That it’s inherently cold or incapable of fostering a real connection. Our therapeutic alliance data challenges this.

• If you could instantly solve one challenge in AI-driven mental health, what would it be?

Ensuring demonstrable safety and efficacy across the diverse range of potential user inputs and clinical situations.

• What’s the most surprising or unexpected user feedback you’ve received about Therabot?

The depth of connection reported by some users, who described feeling genuinely understood and supported, went beyond simple helpfulness.

• If you weren’t working in AI and mental health, what field would you be in?

Likely still focused on applying data science to improve human well-being, perhaps more directly in computational social science or public health informatics.

• What’s one sci-fi movie or book that best represents the future of AI in therapy (for better or worse)?

It’s hard to pick one, as fiction often gravitates towards extremes. The reality will likely be a tool with significant potential benefits (like increased access and personalization) alongside risks (safety, privacy, bias) that require careful management and oversight.

• What books should I read in 2025?

Stay updated on publications regarding AI ethics in healthcare, the latest research on digital therapeutics (especially RCTs), and perhaps foundational texts in cognitive behavioral science to understand the principles these tools aim to deliver.

(Wow! Thank you, Dr. Jacobson, for an incredibly inspiring conversation! We look forward to our next visit to see more of your innovative research. Until then, we wish you continued success in all your future endeavors.)